Fusaka in Action: What the Latest Ethereum Upgrade Means for L2s, Nodes, and Users

Ethereum mainnet has completed the Fusaka fork. At the protocol level, the upgrade consists mainly of four components (see Table 1). The full text below presents the core viewpoints and first-hand experience of three guests in a Q&A format:

- Ahmad (@smartprogrammer) – Nethermind Execution Client / Ethereum Core Developer
- Manu (@manunalepa) – Prysm / Offchain Labs Ethereum Core Developer
- Sarah (@schwartzswartz) – zkSync Developer Relations
- Host: Esme (@esmeeezy) – OKX Ventures Investment Manager

Table 1: Overview of Fusaka's Four Core Changes

| Module | Specific Change | Direct Effect |
| --- | --- | --- |
| Peer DAS | Node-collaborative data availability sampling; blobs are split into 128 columns for distributed hosting | Reduces per-node DA load, laying the foundation for future scaling |
| EIP-7918 | Fixes the long-standing incorrect pricing of blob basefee at 1 wei | Brings blob fees back to a normal range with meaningful price signals |
| P-256 Precompile | Introduces a P-256 elliptic curve precompile (required for FIDO2 / WebAuthn) | Paves the way for seed-phrase-less / passkey wallets |
| BPO (Blob Parameter Only) | A series of small forks that only adjust blob parameters (target / max blobs) | Gradually increases DA capacity through multiple small upgrades |

Q1 – Over the Next 2–4 Weeks, What Visible Changes Will Happen On-Chain?

Most of Fusaka's changes are at the base layer (Peer DAS, gas parameters, blob mechanisms). The short-term impact on three types of participants is summarized in Table 2:

Table 2: Short-Term Visible Changes for Three Types of Participants

| Participant | Dimension to Watch | Expected Changes in 2–4 Weeks Post-Fusaka | Guest Highlights |
| --- | --- | --- | --- |
| L1 Users | L1 fees / latency | Gas costs for regular transactions decrease slightly; extreme spikes are reduced | Main driver is the gas limit pre-raised from 45M → 60M, not Peer DAS taking direct effect |
| Node Operators | CPU / bandwidth / disk, stability | Regular nodes have a friendlier daily load; large nodes gradually take on more DA responsibilities | Peer DAS is live but the blob limit is deliberately unchanged; the load structure quietly rearranges first |
| Rollup / L2 | DA costs / fee curves / strategy | DA cost curve is smoother; extreme spike frequency and magnitude decrease | Real volume increase waits for future BPO forks to raise blob limits |

Ahmad (Nethermind): L1 Gets Quieter; Real Scaling Left to BPO

Gas limit increase already landed beforehand

Before Fusaka, proposers had already raised the L1 gas limit from 45M to 60M, completing the consensus parameter change via proposer vote:

More transactions per block

L1 basefee drops on average; regular transfers become cheaper

The recent mainnet cost reduction is mainly due to parameter tuning, not a direct effect of Peer DAS.

Peer DAS is enabled, but blob limit deliberately left unchanged

Peer DAS splits blobs into 128 columns distributed across different nodes and uses sampling to determine availability, so not every node has to download the complete blob.

However, the per-block blob limit was not raised at the same time, so until the BPO forks gradually raise it, users will hardly notice that Peer DAS exists.

Sarah (zkSync): Smoother DA Cost Curve + an Underestimated UX Variable: Passkey

Lower, more stable DA costs

With L1 space more abundant and the pricing mechanism more reasonable, zkSync expects:

More blobs per block

Extreme blob fee spikes significantly reduced

A smoother L2 fee curve with more controllable tails. Don't expect fees to get 90% cheaper overnight; the core improvement is in tail risk and long-tail scenarios

Scalability and decentralization are just as important as price alone

Sharding-driven DA lets Ethereum support more rollups rather than becoming an L2 bottleneck.

For zkSync, what matters more during scale expansion is preventing Ethereum itself from becoming a single point of failure.

P-256 Precompile and Passkey: Protocol Layer Removes the 12-Word UX Obstacle

P-256 precompile is the foundation required for FIDO2 / WebAuthn.

Users can sign with Face ID / device secure enclave, with no exposure to seed phrases throughout.

For new users, writing down 12 English words and ensuring they are never lost is a typical drop-off point. Fusaka directly clears this UX landmine for seed-phrase-less wallets at the protocol layer.

Manu (Prysm): Finality Staying Boring Means Success

He focuses on one core risk: whether finality could fail under high blob load.

Before Peer DAS: Every node downloads and stores all blobs — extremely heavy load.

After Peer DAS: Nodes only host portions of shards, introducing a new failure mode — data unavailability (DA failure).

System constraints:

Any block containing unavailable blobs cannot be finalized;

A block can only be finalized with at least 2/3 stake support;

Honest stakers will not vote for blocks with unavailable data.
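The three constraints above amount to a stake-threshold argument. A minimal sketch, assuming stake is expressed as a fraction of total stake; the function names are illustrative and do not come from any client:

```python
from fractions import Fraction

FINALITY_THRESHOLD = Fraction(2, 3)  # share of total stake needed to finalize


def can_finalize(supporting_stake: Fraction) -> bool:
    """A block finalizes only with at least 2/3 of total stake behind it."""
    return supporting_stake >= FINALITY_THRESHOLD


def unavailable_block_can_finalize(honest_stake: Fraction) -> bool:
    """Honest stakers never vote for a block with unavailable data, so such a
    block can gather at most the non-honest share of stake."""
    return can_finalize(1 - honest_stake)


# With 40% honest stake, at most 60% can back an unavailable block: below 2/3.
print(unavailable_block_can_finalize(Fraction(2, 5)))  # False
# Only when honest stake is 1/3 or less could such a block reach the threshold.
print(unavailable_block_can_finalize(Fraction(1, 3)))  # True
```

In this simplified model, finality stays safe against DA failure as long as more than one third of stake is honest.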

Q2 – What metrics is Peer DAS actually monitoring?

How can nodes assess data availability without downloading the complete blob?

Table 3: Core DA health monitoring metrics under Peer DAS (Manu)

| Metric Type | Metric | Meaning / Threshold | Interpretation & Action |
| --- | --- | --- | --- |
| Hard red line | Sampling failure rate | Theoretically pushed to ~10⁻²⁰–10⁻²⁴; in practice any value significantly above 0 is anomalous | A significant non-zero sampling failure rate is immediately treated as a major red alert |
| Hard red line | Blocks with missing blobs being finalized | A single occurrence is equivalent to total DA failure | Immediate rollback or social-layer intervention required; scaling parameters must be halted |
| Soft metric | DA-related soft fork / reorg count | Whether reorgs increase because CPU / bandwidth / disk cannot keep up with DA load | Indicates parameters were pushed too aggressively; need to pause or roll back |
| Soft metric | Node sync status | Whether a large number of nodes persistently lag behind the chain head and frequently catch up via historical requests | Widespread lag means now is not the time to raise blob limits further |

Sarah: Watch blob price curves and full blob availability

For zkSync, Peer DAS health comes down to two things:

Blob gas price behavior

Whether it stabilizes under the new rules;

Whether it reduces situations where DA costs suddenly spike on certain days and batch economics get wrecked.

Whether full blobs are available at any time

While most nodes no longer need to see a full blob, L2s like zkSync that need to read historical blobs back still require that enough nodes in the network can quickly reconstruct complete blobs, especially the infrastructure nodes they depend on.

If such nodes become overly concentrated or their count drops significantly, she treats it as a DA centralization risk signal.

Ahmad: super / semi-super-node mode and the new 4844 transaction format

Super-node / Semi-super-node mode

In the Peer DAS design, each blob is split into 128 shards (columns). If you're an L2 responsible for submitting blobs and need to read those blobs back later to generate proofs / advance the rollup state, you need your beacon node to run in:

Super-node mode: Download and host all 128 columns; or

Semi-super-node mode: Download 64 columns and reconstruct the full blob via erasure coding.

Otherwise, once you can't read back your own blobs, you'll be blind to your own data at critical junctures.

New cell proofs field in the EIP-4844 transaction format

After Fusaka, 4844 blob transactions add a cell proofs field carrying the commitment / proof metadata that Peer DAS needs.

Some execution clients (e.g., geth, Nethermind) automatically upgrade old-format transactions to the new format for backward compatibility.

On early testnets, because a reference client didn't implement this compatibility logic as expected, zkSync experienced an incident that took down the testnet directly.

So his point is clear: DA health depends not only on protocol design and monitoring dashboards, but also on whether node modes are correctly configured and whether clients are upgraded in order and by version.

Manu: How validator custody allocates shards by stake size

When Sarah asked how many nodes would end up running as super / semi-super-nodes, Manu explained using the validator custody mechanism:

Table 4: Validator custody & shard allocation overview

| Node Type | Stake size example | Columns (shards) to host | Notes |
| --- | --- | --- | --- |
| Regular full node (no stake) | 0 ETH | 4 columns | Lightweight data hosting only |
| Single validator node | 32 ETH | 8 columns | Higher DA responsibility than a non-staking node |
| Multi-validator node (example 1) | 256 ETH | 8 columns | Hosted columns increase in tiers with stake size |
| Multi-validator node (example 2) | 288 ETH | 9 columns | Every additional 32 ETH adds 1 hosted column |
| Super-node (super-node threshold) | 4096 ETH | 128 columns (all) | Becomes a de facto super-node, hosting the full set |
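The tiers in Table 4 can be expressed as a small function. This is a sketch that reproduces the table's examples only; the constant names are illustrative, and the exact rule used by clients should be checked against the Peer DAS specification:

```python
NUMBER_OF_COLUMNS = 128       # each blob is split into 128 columns
FULL_NODE_COLUMNS = 4         # non-staking full node (Table 4)
VALIDATOR_MIN_COLUMNS = 8     # minimum for any node with validators attached
ETH_PER_COLUMN = 32           # every additional 32 ETH adds one column


def custody_columns(staked_eth: int) -> int:
    """Number of columns a node hosts, given the total ETH staked behind it."""
    if staked_eth < 32:
        return FULL_NODE_COLUMNS
    by_stake = staked_eth // ETH_PER_COLUMN
    return min(NUMBER_OF_COLUMNS, max(VALIDATOR_MIN_COLUMNS, by_stake))


for eth in (0, 32, 256, 288, 4096):
    print(eth, custody_columns(eth))
# 0 4
# 32 8
# 256 8
# 288 9
# 4096 128
```

Under this rule a node becomes a de facto super-node once the stake behind it reaches 128 × 32 = 4096 ETH.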

The overall logic:

Operators with more stake bear more DA responsibility and must upgrade their hardware accordingly;

Small solo stakers can still run full nodes with lighter configurations;

For rollups, the more realistic approach is to run super-nodes directly as infrastructure.

Semi-super-node / light-super-node mode: hosts 64 shards and reconstructs complete blobs through erasure coding.

Q3 – EIP-7918: blob fees become more reasonable, not lower

In a highly volatile environment, it's difficult to build serious credit markets or derivatives markets. The core significance of EIP-7918 is to transform the blob fee curve into something that can be risk-managed and underwritten, rather than continuing to swing wildly between nearly free and unusable.

Table 5: Comparison of blob fees and behavior before and after EIP-7918

| Phase / Scenario | Blob basefee (approximate) | Cost of a single blob (ETH ≈ $3,000) | Behavior / Issues |
| --- | --- | --- | --- |
| Before Fusaka (normal state) | 1 wei | ≈ $0.0000000004 | Pinned at 1 wei about 95% of the time; nearly free; price signals ineffective |
| Before Fusaka (extreme spikes) | ~42,000 gwei | ≈ $15,000 | On some days blob costs surge 9-10 orders of magnitude; prices extremely unstable |
| After Fusaka + EIP-7918 (at the time of the Space) | ~0.025 gwei | ≈ $0.01 | Up 25 million times from 1 wei, but still extremely cheap; fluctuates with load |
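The dollar figures in Table 5 follow from the blob basefee and the fixed amount of blob gas each blob consumes. A minimal sketch, assuming 131,072 (2^17) blob gas per blob as in EIP-4844 and the table's ETH price of $3,000; priority fees and execution gas are ignored:

```python
GAS_PER_BLOB = 2**17      # 131,072 blob gas per blob (EIP-4844)
WEI_PER_GWEI = 10**9
WEI_PER_ETH = 10**18


def blob_cost_usd(blob_basefee_wei: int, eth_usd: float = 3000.0) -> float:
    """Base cost of one blob in USD at the given blob basefee (in wei)."""
    cost_eth = blob_basefee_wei * GAS_PER_BLOB / WEI_PER_ETH
    return cost_eth * eth_usd


print(f"{blob_cost_usd(1):.2e}")                      # 3.93e-10
print(f"{blob_cost_usd(25_000_000):.2e}")             # 9.83e-03 (0.025 gwei)
print(f"{blob_cost_usd(42_000 * WEI_PER_GWEI):.2e}")  # 1.65e+04
```

At 42,000 gwei the formula gives roughly $16,500 at $3,000 per ETH, in the same range as the approximate figure quoted by the guests.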

Sarah: Strategies won't adjust dramatically on day one

Raising the blob fee floor from 1 wei to a slightly higher level won't immediately change existing economic models or batch strategies;

A more realistic approach is to observe behavior under real mainnet load for some time first, then gradually fine-tune;

EIP-7918 is more like cleaning up pathological behavior rather than immediately unlocking new optimization space.

Ahmad: Fixing the structural error of a permanent 1 wei

Before Fusaka, blob basefee sat at 1 wei about 95% of the time, because EIP-4844's fee adjustment mechanism keeps decaying the basefee toward its minimum value.

EIP-7918 introduces a minimum blob basefee tied to the execution layer basefee. It can be approximately understood as: blob basefee can no longer get arbitrarily close to zero, and must not fall below a certain proportion of the execution layer basefee (the specific parameters aren't simply 1/16, but that's the direction).

The effect: when demand increases, blob basefee can rise fast enough and won't remain stuck at 1 wei.

The market becomes more efficient, but this won't eliminate rollup bidding competition during peak periods, when rollups will still compete for blob space and block inclusion through priority fees.
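The idea of tying the blob basefee to the execution basefee can be sketched as a threshold check. The constants below are what I understand EIP-7918 and EIP-4844 to specify (`BLOB_BASE_COST = 2**13`, `GAS_PER_BLOB = 2**17`) and should be treated as assumptions to verify against the EIPs; the function names are hypothetical. In the actual mechanism the threshold governs whether the blob basefee is allowed to keep decaying, rather than acting as a hard clamp, which is one reason the "1/16" shorthand is only approximate.

```python
GAS_PER_BLOB = 2**17     # blob gas consumed per blob (EIP-4844)
BLOB_BASE_COST = 2**13   # assumed EIP-7918 constant; verify against the EIP


def reserve_blob_basefee(exec_basefee_wei: int) -> int:
    """Blob basefee (wei) at which the reserve-price condition is exactly met."""
    return exec_basefee_wei * BLOB_BASE_COST // GAS_PER_BLOB


def decay_allowed(exec_basefee_wei: int, blob_basefee_wei: int) -> bool:
    """True if the blob basefee is at or above the reserve level, so the
    usual EIP-4844 downward adjustment may continue."""
    return BLOB_BASE_COST * exec_basefee_wei <= GAS_PER_BLOB * blob_basefee_wei


exec_basefee = 400_000_000  # 0.4 gwei, an illustrative value
print(reserve_blob_basefee(exec_basefee))       # 25000000 wei = 0.025 gwei
print(decay_allowed(exec_basefee, 1))           # False: 1 wei is below reserve
print(decay_allowed(exec_basefee, 25_000_000))  # True
```

With an illustrative execution basefee of 0.4 gwei, the reserve level lands at 0.025 gwei, the same order as the post-Fusaka blob basefee quoted in the discussion.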

Manu: From nearly free to still cheap, but no longer ridiculous

Before Fusaka: blob basefee ≈ 1 wei and a single blob cost ≈ $0.0000000004; this was not cheapness but mispricing;

At the time of the Space: basefee ≈ 0.025 gwei, about 25 million times higher, but because capacity is far from full, a blob still costs only ≈ $0.01;

Before Fusaka, the network almost never actually reached the target capacity of 6 blobs per block, which is the direct reason the basefee stayed at 1 wei for so long.

Three takeaways:

After 7918, average blob fees do rise;

Until capacity fills up, blobs remain extremely cheap;

More importantly, fees will fluctuate normally with congestion and execution layer costs instead of being stuck at 1 wei.

Q4 – After Fusaka, multi-DA remains necessary, but becomes an active choice

After Fusaka + Peer DAS + BPO, whether to use alt-DA changes from a fallback solution forced by prices to a proactive decision configured by scenario.

Table 6: Application types vs DA selection

| Application type | Recommended DA selection | Core considerations |
| --- | --- | --- |
| L3 / gaming chains / social, lower asset importance | Can accept alt-DA / multi-DA without a strong bridge | Main concern is service availability, not extreme security |
| High-value DeFi / chains hosting large assets | Ethereum DA, or DA with a robust bridge back to Ethereum | Additional trust assumptions = systemic risk |
| Newly launched rollups | Fewer and fewer reasons to default to alt-DA | After Fusaka, ETH DA cost / predictability improves |
| Projects already on alt-DA | Won't collectively migrate back to Ethereum in the short term | Migration costs are high; long-term divergence is more realistic |

Sarah: New projects have less reason to default to alt-DA, but there will be no mass exodus

Past extreme blob fee spikes will make many teams wait and watch the post-Fusaka curve for some time;

For new projects, there are now few reasons to default to alt-DA:

Ethereum DA improves cost-effectiveness per unit of security;

Prices are more predictable.

But projects already on alt-DA won't tear down their infrastructure and rebuild in the short term. A more realistic landscape is:

Heavy-asset / DeFi chains lean toward ETH DA;

For gaming / social and other high-throughput but asset-light scenarios, continuing to use alt-DA makes sense.

Ahmad: The essence of DA selection is trust assumption selection

Drawing on practice from Taiko Surge, he reduces the question to: to what extent do you let users trust that an external system won't disappear and won't act maliciously?

Gaming chains / L3 social: can accept alt-DA without strong bridges to Ethereum, essentially betting that the service won't suddenly go offline.

Financial chains / custodial asset chains: every additional layer of trust assumption is systemic risk. A more reasonable choice is ETH DA, or external DA with sufficiently low trust assumptions and robust bridges.

The question shifts from "where is it cheaper?" to "in how many scenarios would I destroy users' exit guarantees?"

Manu: alt-DA was historically a hedge against extreme blob fee volatility; that motivation is now weaker

The extreme case he mentions is:

On October 25, blob basefee briefly spiked to about 42,000 gwei, with a single blob costing around $15,000. Compared with the usual $0.0000000004 or the currently common $0.01 level, that is extreme volatility.

In this environment, using alt-DA as a hedge is reasonable: in case ETH DA pricing goes out of control, at least there's still a place to publish data.

After Fusaka + BPO, he expects:

Average blob price slightly rises;

Because 7918 fixes the fee mechanism and BPO increases capacity, variance drops significantly;

The motivation to use alt-DA as a price hedge weakens, and the choice becomes more of a product positioning question:

Which security model do users actually need more?

Which latency and SLA?

BPO timeline: blob scaling completed through a series of small, quick steps

The network can handle more L2 demand before blob prices enter extreme ranges, so the blob market more easily stays in a healthy middle range for longer.

Table 7: BPO series fork parameters (Ahmad + Manu)

| Fork | Time (as given by guests at the time) | Max blobs per block | Target blobs per block | Effect |
| --- | --- | --- | --- | --- |
| BPO-1 | Mid-December | | | Increases from 6/9 (target/max); significant capacity expansion |
| BPO-2 | January 7 | | | Effective blob capacity approximately doubles, making prices more likely to stay in a healthy range |

Q5 – When to raise blob capacity? What are the metrics and red lines?

Fusaka itself doesn't raise the blob cap; the real capacity increase comes in the subsequent BPO series.

Manu: Only raise parameters if DA is stable

Red lines (pause scaling):

Any significant increase in sampling failure rate;

Blocks with missing blobs are finalized (DA failure);

DA-related soft forks / reorgs due to insufficient node performance continue rising.

Green lights (can consider raising cap):

Sampling failure rate stays close to the theoretical minimum over the long term, nearly zero;

No finalized block issues caused by DA failure;

Vast majority of nodes can keep up with chain head, gossip network has no significant backlog, and no large-scale long-term reliance on historical requests to catch up.
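The red lines and green lights above can be combined into a simple gating rule. The sketch below is illustrative only: the metric names and both numeric thresholds are hypothetical placeholders, not values given by the guests or used by any client.

```python
from dataclasses import dataclass


@dataclass
class DAHealth:
    sampling_failure_rate: float         # observed share of failed samples
    finalized_blocks_missing_blobs: int  # must always be zero
    da_reorgs_rising: bool               # DA-related reorgs trending upward
    nodes_at_head_share: float           # share of nodes keeping up with head


# Hypothetical thresholds, chosen only to make the example concrete.
MAX_SAMPLING_FAILURE_RATE = 1e-9
MIN_NODES_AT_HEAD_SHARE = 0.95


def blob_cap_decision(h: DAHealth) -> str:
    """Return 'pause', 'hold', or 'raise' for the next blob-cap change."""
    # Red lines: any of these means scaling must stop.
    if h.finalized_blocks_missing_blobs > 0:
        return "pause"
    if h.sampling_failure_rate > MAX_SAMPLING_FAILURE_RATE:
        return "pause"
    if h.da_reorgs_rising:
        return "pause"
    # Widespread sync lag: not the time to raise limits further.
    if h.nodes_at_head_share < MIN_NODES_AT_HEAD_SHARE:
        return "hold"
    # All green lights: raising the cap can be considered.
    return "raise"


print(blob_cap_decision(DAHealth(0.0, 0, False, 0.99)))  # raise
print(blob_cap_decision(DAHealth(0.0, 0, False, 0.80)))  # hold
print(blob_cap_decision(DAHealth(0.0, 1, False, 0.99)))  # pause
```

Checking the hard red lines first mirrors the ordering in the discussion: a single finalized block with missing blobs outweighs every other signal.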

If Peer DAS itself approaches its ceiling in the future, researchers have already prepared a more aggressive full DAS + 2D sharding design, but it would be much more complex and is still at the research stage.

Sarah: More concerned about who can still afford to run semi-super-nodes

She focuses on whether the ability to access complete blobs is becoming overly concentrated:

If the blob cap keeps rising and fewer and fewer entities can afford semi-/super-node configurations;

If zkSync's set of nodes that can reliably fetch complete blobs keeps shrinking and concentrates on a few large nodes;

Then, even if on-chain DA metrics look normal, she'll lean toward slowing the pace of BPO rollout. In other words: under current parameters, how many participants can actually afford robust configurations?

Manu & Ahmad: semi-super-node mode is essential configuration for rollups

Their shared structural view of node roles:

Large validators naturally evolve into super-nodes as their stake grows, hosting more shards and needing stronger hardware;

Small validators and non-staking full nodes can still run at lower cost, bearing lighter DA responsibilities;

For rollups, the more realistic option is to explicitly run in semi-super-node / light-super-node mode:

Host 64 shards (half);

Use Reed-Solomon-style erasure coding to reconstruct complete blobs;

Clients typically expose --semi-super-node / --light-super-node flags for easy direct enablement.
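Why hosting half the columns is enough can be shown with a toy Reed-Solomon-style code. The sketch below works over a small prime field with 4 data values extended to 8, and recovers the data from any 4 of them via Lagrange interpolation. It illustrates the principle only: the field, sizes, and function names are assumptions for the example, and real Peer DAS uses 128 columns with KZG commitments.

```python
P = 2**31 - 1  # toy prime field modulus (not the field used by Ethereum)


def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial of degree < len(points) that
    passes through the given (xi, yi) points, with arithmetic mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def extend(data):
    """Treat data[i] as the polynomial's value at x=i and append the values
    at x=n..2n-1, doubling the length (rate-1/2 erasure code)."""
    n = len(data)
    points = list(enumerate(data))
    return data + [lagrange_eval(points, x) for x in range(n, 2 * n)]


def reconstruct(known, n):
    """Recover the n original values from any n known (index -> value) pairs."""
    points = list(known.items())[:n]
    return [lagrange_eval(points, x) for x in range(n)]


data = [5, 17, 42, 99]
coded = extend(data)                             # 8 values in total
half = {i: coded[i] for i in (1, 4, 6, 7)}       # keep any 4 of the 8
print(reconstruct(half, len(data)) == data)      # True
```

The same property, scaled up, is what lets a node holding 64 of 128 columns rebuild the complete blob.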

Ahmad also mentioned they'll refer to community real-time monitoring, such as ethPandaOps' experimental dashboard, which continuously probes whether nodes correctly serve their hosted shards. At the time of the Space, the probe success rate was close to 100%, and the same BPO parameters had already run on testnet, which is one reason they're relatively optimistic about mainnet expansion.

Q6 – Preconfirmation & proposer lookahead: new control dimension for UX

Fusaka introduces deterministic proposer lookahead: the beacon chain can know the block proposers for several upcoming slots in advance. This provides a foundation for more serious preconfirmation designs, while also bringing new attack surfaces and design constraints. If this direction gradually matures, we'll likely see:

A new type of preconfirmation service provider, selling timing guarantees on top of Ethereum finality;

A new MEV space that also serves as an infrastructure layer for improving user experience.

Table 8: Preconfirmation modes and application scenarios

| Type | Target audience | Typical confirmation time change |
| --- | --- | --- |

Q7 – Fusaka's second-order effects: where will they show up in 6–12 months?

… tuned to the upper limit of what can be supported, largely because L1 DA is seen as a potential bottleneck;

When blob capacity increases and the fee curve stabilizes:

Rollups become more willing to accept more user operations;

More trading activity will be packed into a single blob.

If all these happen, Fusaka's value will be reflected in:

Significant increase in overall L2 activity;

Applications previously deterred by DA volatility re-emerging;

The L2 ecosystem on Ethereum becoming denser and thicker with DA layer support.

Sarah: Reducing the decision risk of launching a rollup

She looks at the entire rollup ecosystem. In the past, many teams were stuck on three things:

Extreme blob price volatility;

Unclear DA guarantees;

Inability to make a clear choice between alt-DA and ETH DA.

After Fusaka, she expects:

Some rollups that have been watching from the sidelines will actually launch;

More app-specific rollups anchored to Ethereum will emerge;

The motivation to migrate to completely different ecosystems solely due to DA reasons will weaken.

Disclaimer

This article may contain content related to products that are not available in your region. This article is dedicated to providing general information only, and we are not responsible for any factual errors or omissions. This article represents the author's personal views only and does not represent the views of OKX. This article is not intended to provide any of the following advice, including but not limited to: (i) investment advice or investment recommendations; (ii) an offer or solicitation to buy, sell, or hold digital assets; or (iii) financial, accounting, legal, or tax advice. Holding digital assets (including stablecoins) involves high risk, may fluctuate significantly, and may even become worthless. You should carefully consider whether trading or holding digital assets is suitable for you based on your financial situation. For questions regarding your specific circumstances, please consult your legal/tax/investment professionals. The information appearing in this article (including market data and statistics, if any) is for general reference only. Although we have exercised all reasonable care in preparing this data and these charts, we assume no responsibility for any factual errors or omissions expressed herein. © 2025 OKX. This article may be reproduced or distributed in its entirety, and excerpts of 100 words or less may also be used, provided such use is non-commercial. Any reproduction or distribution of the entire article must also prominently state: "This article is copyrighted © 2025 OKX, used with permission." Permitted excerpts must cite the article title and include attribution, such as "Article Title, [Author Name (if applicable)], © 2025 OKX". Some content may be generated or assisted by artificial intelligence (AI) tools. Derivative works or other uses of this article are not permitted.

Show More

Q1 – In the next 2–4 weeks, what visible changes will happen on-chain?

Q2 – What metrics is Peer DAS actually looking at?

Q3 – EIP-7918: blob fees aren't lower, but more reasonable

Q4 – After Fusaka, multi-DA remains necessary, but becomes an active choice

Q5 – When should blob capacity be increased? What are the metrics and red lines?

Q6 – Pre-confirmation and proposer lookahead: new control dimensions for UX

Q7 – Fusaka's second-order effects: where will they show up in 6–12 months?

Recommended Reading

2025 KOL Favorite OKX Products Rankings

In the cryptocurrency industry, professional players' choices are always direct and pure. In 2025, KOLs used their full year's capital investment and time accumulation to cast the most authentic vote for industry tools and ecosystem development. We focused on four core questions — "What was the biggest achievement this year?", "Given your achievements, what was your most frequently used and favorite OKX product in 2025?", "Why do you like it?", "This

January 5, 2026

2026 Investment Outlook: Assets On-Chain, Intelligence and Privacy | OKX Annual Record

Three major trends in Crypto's future: Asset transformation, agent transformation, and rule transformation. As we approach 2026, bidding farewell to the past four years focused on "road-building" infrastructure, the crypto industry is undergoing a profound paradigm shift. OKX Ventures defines it as the beginning of the "Kinetic Finance" era, where the core is no longer how fast the network is, but the flow and earning

December 31, 2025

Voting with Data, Insights into 2025 Popular Trading Products | OKX

```

Annual Review

Looking only at market conditions, it's hard to explain the differences in returns among traders on OKX in 2025. What truly determines returns depends just as much on account-level trading methods, not just market volatility itself. OKX's annual report shows that mainstream coins remain the core of fund周转 and return generation, supporting trading and strategy execution; emerging coins are mostly used to amplify volatility and provide阶段性 opportunities, but are not a stable, long-term source of returns. What truly and consistently contributes to returns...

December 30, 2025

OKX Research | Why Did RWA Become a Key Narrative in 2025?

RWA (Real World Assets) is becoming the "new favorite" of global capital. Simply put, RWA is about taking valuable, ownership-backed assets from the real world—such as houses, bonds, stocks, and other traditional financial assets, or even art, private lending, and carbon credits that are not usually easy to trade directly—and moving them onto the blockchain, turning them into tradable, programmable crypto assets. This way, ...

November 20, 2025

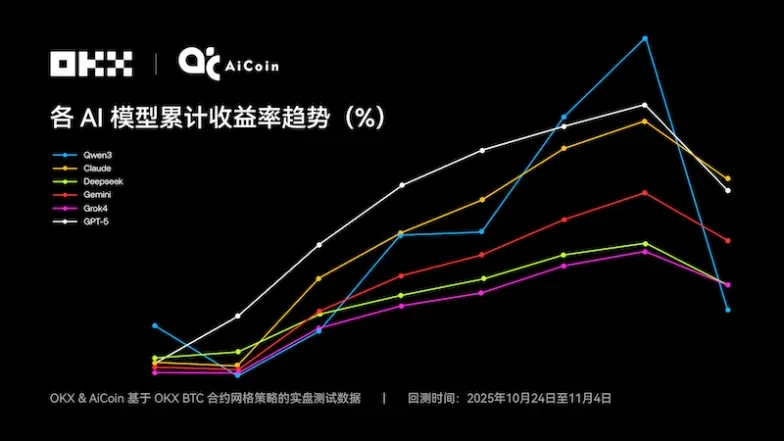

Claude Takes the Crown — 6 Major AI Grid Strategies Face Off | OKX & Ai Coin Live Test

Is , the short-term trading champion, also the king of grid strategies? The first season of NOF1's "AI Trading Live Arena" finally concluded at 6 AM on November 4, 2025, whetting the appetite of the crypto, tech, and financial communities. But the outcome of this "AI IQ public test" was somewhat unexpected—the six models' combined $60,000 in principal had shrunk to just $4.3...

November 6, 2025

Navigate Bull and Bear Markets, Achieve Stable Profits: Mastering OKX's Product Suite

Just like in traditional financial markets, the biggest obstacles for ordinary users looking to profit in the crypto space are threefold: cash flow shortages, rare opportunities for stable profits, and the inability to execute correct trades during periods of剧烈 volatility. The specific manifestations of these three points are: 1. Everyone knows the trading law of buying low and selling high, but struggles with insufficient capital to maximize returns. When the market is sluggish, managing a cash-strapped account means...

November 3, 2025